Your llms.txt Adoption Numbers Are Wrong: How Soft-404s Inflate AI Metrics by 25×

Published 2026-04-20 · PROGEOLAB Research

A soft-404 is a web server configuration that returns HTTP 200 for URL paths that don't exist. Instead of returning 404, the server returns the homepage, a custom branded error page, or a catch-all template — all with a 200 status code. For human visitors this is a usability improvement (no ugly error screen). For AI-standards measurement it's a silent data poisoner. Every scanner that checks whether a file exists by looking at the HTTP status code reads soft-404 responses as real implementations.

The 41% problem

Of 388 Fortune 500 sites that responded to our audit, 160 (41%) serve soft-404 pages for any requested path. We tested this by requesting random nonsense paths (/xyzabc, /nonexistent-path-987) and checking whether each response was HTTP 404 or HTTP 200. The 160 sites counted here returned 200 for those nonsense paths.
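The nonsense-path test can be sketched in a few lines of Python. This is a minimal illustration, not the audit's actual tooling: the function names (`http_status`, `random_probe_path`, `serves_soft_404`), the User-Agent string, and the choice of two probes per site are all assumptions made for the example.

```python
import secrets
from typing import Callable, Optional
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the final HTTP status for url, or None on a network-level failure."""
    req = Request(url, headers={"User-Agent": "soft404-probe-sketch"})  # hypothetical UA
    try:
        with urlopen(req, timeout=timeout) as resp:  # follows redirects by default
            return resp.status
    except HTTPError as err:
        # urllib raises for 4xx/5xx; the status code is still available.
        return err.code
    except OSError:
        # DNS failure, refused connection, timeout, and similar.
        return None


def random_probe_path() -> str:
    """Build a path that should not exist on any real site."""
    return "/nonexistent-" + secrets.token_hex(8)


def serves_soft_404(
    origin: str,
    get_status: Callable[[str], Optional[int]] = http_status,
    probes: int = 2,  # assumption: two random paths per site
) -> Optional[bool]:
    """True if every nonsense path returns 200, False otherwise, None if unreachable."""
    base = origin.rstrip("/")
    statuses = [get_status(base + random_probe_path()) for _ in range(probes)]
    if any(status is None for status in statuses):
        return None
    return all(status == 200 for status in statuses)
```

Passing `get_status` as a parameter keeps the classification logic separate from the network call, so it can be exercised with a stub before being pointed at real sites.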

Those 160 sites inflate every AI-standard metric. When a researcher probes them for /llms.txt, they get HTTP 200 with an HTML homepage. When they probe for /ai.txt, same. /agents.json, same. The sites don't have any of these files — but status-code-only scans record them all as implementations.

The inflation numbers

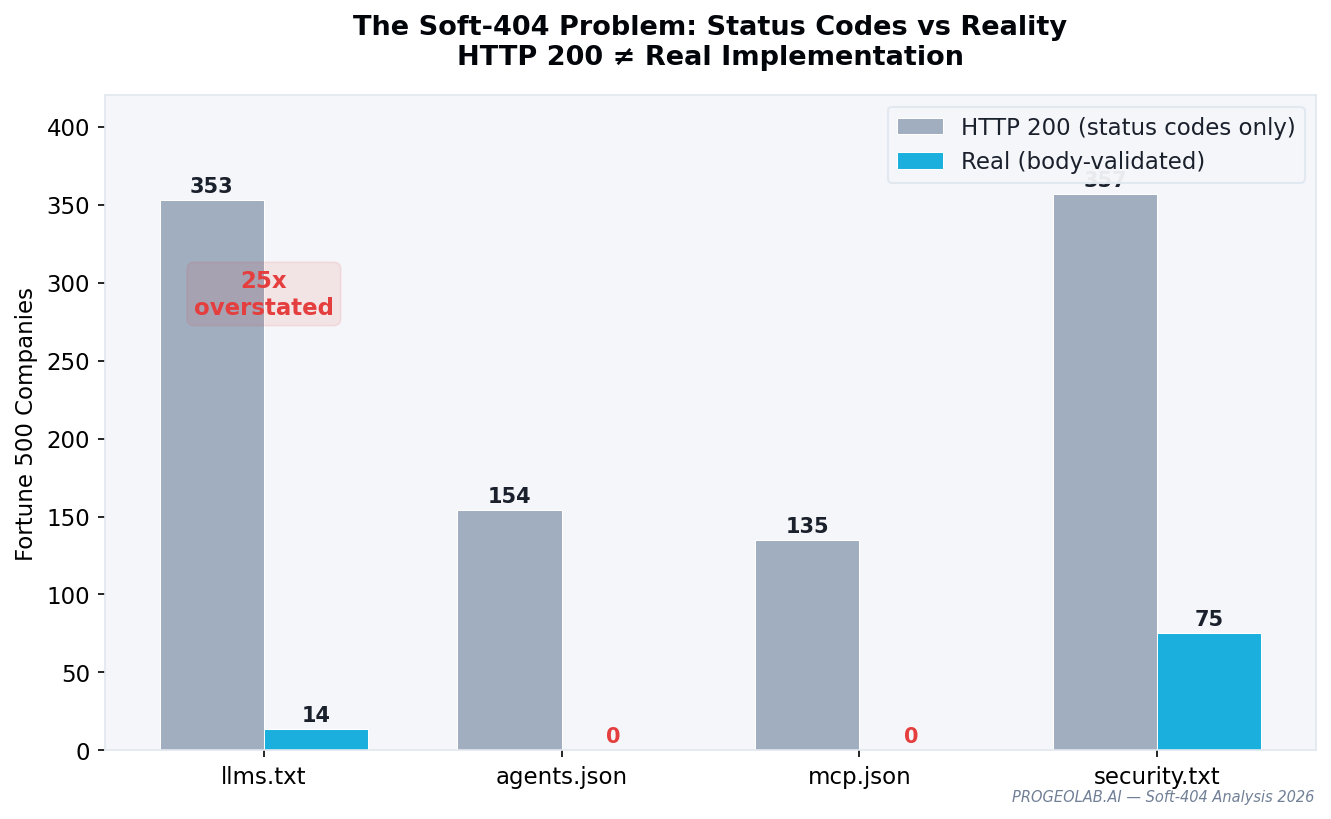

| Standard | HTTP 200 responses | Real implementations | Inflation |
|---|---|---|---|
| llms.txt | 353 | 14 | 25× |
| ai.txt | 357 | 0 | ∞ |
| agents.json | 329 | 0 | ∞ |
| mcp.json | 298 | 0 | ∞ |

The llms.txt 25× inflation is the most consequential because llms.txt has real implementations. ai.txt, agents.json, and mcp.json have zero, so the entire "adoption" signal from status-code scanners is hallucinated. Any analyst claiming "60%+ of the Fortune 500 has adopted ai.txt" is reading soft-404 pages and counting them.
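The inflation column is the ratio of HTTP 200 responses to body-validated implementations. A short sketch of that arithmetic, using the counts from the table; the `inflation` helper and the `COUNTS` name are illustrative, and treating a zero denominator as infinite follows the table's ∞ entries.

```python
import math

# (HTTP 200 responses, real implementations), copied from the table above.
COUNTS = {
    "llms.txt": (353, 14),
    "ai.txt": (357, 0),
    "agents.json": (329, 0),
    "mcp.json": (298, 0),
}


def inflation(http_200: int, real: int) -> float:
    """Ratio of status-code hits to validated files; infinite when nothing is real."""
    if real == 0:
        return math.inf if http_200 > 0 else math.nan
    return http_200 / real


for standard, (http_200, real) in COUNTS.items():
    print(f"{standard}: {inflation(http_200, real):.1f}x")
```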

The body-validation fix

The correct method for measuring AI-standard adoption:

- Probe a known-nonexistent URL first: /.well-known/xyzabc-probe-978 or similar. Record the response body's MD5 hash. This is the soft-404 signature.

- Probe the target path. Record the response body's MD5 hash.

- Compare hashes. If identical, the site is serving soft-404; the target file doesn't exist. If different, inspect the body for standard-specific markers (e.g. "# " prefix for llms.txt, JSON Schema validation for agents.json).
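The three steps above can be sketched as follows for llms.txt. This is one possible reading of the method, not a reference implementation: the function names, the four result labels, and the "first non-empty line starts with `# `" rule are assumptions chosen for the example, and the marker check is deliberately loose.

```python
import hashlib
from typing import Callable, Optional
from urllib.request import Request, urlopen

PROBE_PATH = "/.well-known/xyzabc-probe-978"  # known-nonexistent path from step 1


def fetch_body(url: str, timeout: float = 10.0) -> Optional[bytes]:
    """Return the body of an HTTP 200 response, or None for anything else."""
    req = Request(url, headers={"User-Agent": "body-validation-sketch"})  # hypothetical UA
    try:
        with urlopen(req, timeout=timeout) as resp:
            return resp.read() if resp.status == 200 else None
    except OSError:
        # Covers urllib's HTTPError/URLError (4xx, 5xx, DNS, timeouts).
        return None


def md5_hex(body: bytes) -> str:
    """MD5 is used only as a cheap content fingerprint, not for security."""
    return hashlib.md5(body).hexdigest()


def has_llms_txt_marker(body: bytes) -> bool:
    """Loose check: the first non-empty line starts with '# '."""
    text = body.decode("utf-8-sig", errors="replace")
    for line in text.splitlines():
        if line.strip():
            return line.startswith("# ")
    return False


def classify_llms_txt(
    origin: str, fetch: Callable[[str], Optional[bytes]] = fetch_body
) -> str:
    """Return 'absent', 'soft-404', 'unvalidated', or 'present'."""
    base = origin.rstrip("/")
    baseline = fetch(base + PROBE_PATH)   # step 1: soft-404 signature
    target = fetch(base + "/llms.txt")    # step 2: target path
    if target is None:
        return "absent"
    # Step 3: identical hashes mean the target is just the catch-all page.
    if baseline is not None and md5_hex(baseline) == md5_hex(target):
        return "soft-404"
    return "present" if has_llms_txt_marker(target) else "unvalidated"
```

A catch-all page that embeds per-request content (a timestamp or a token) would hash differently on each fetch, which is why the marker check still runs when the hashes differ; such responses end up as `unvalidated` rather than `present`.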

This adds one probe per site and eliminates 10-25× over-reporting. Any serious measurement of AI-standard adoption should implement it. The llms.txt adoption data, the security.txt data, and the emerging-standards data in this corpus all use this methodology.