WAF vs AI: The Three Layers of Fortune 500 AI Blocking

Published 2026-04-20 · PROGEOLAB Research

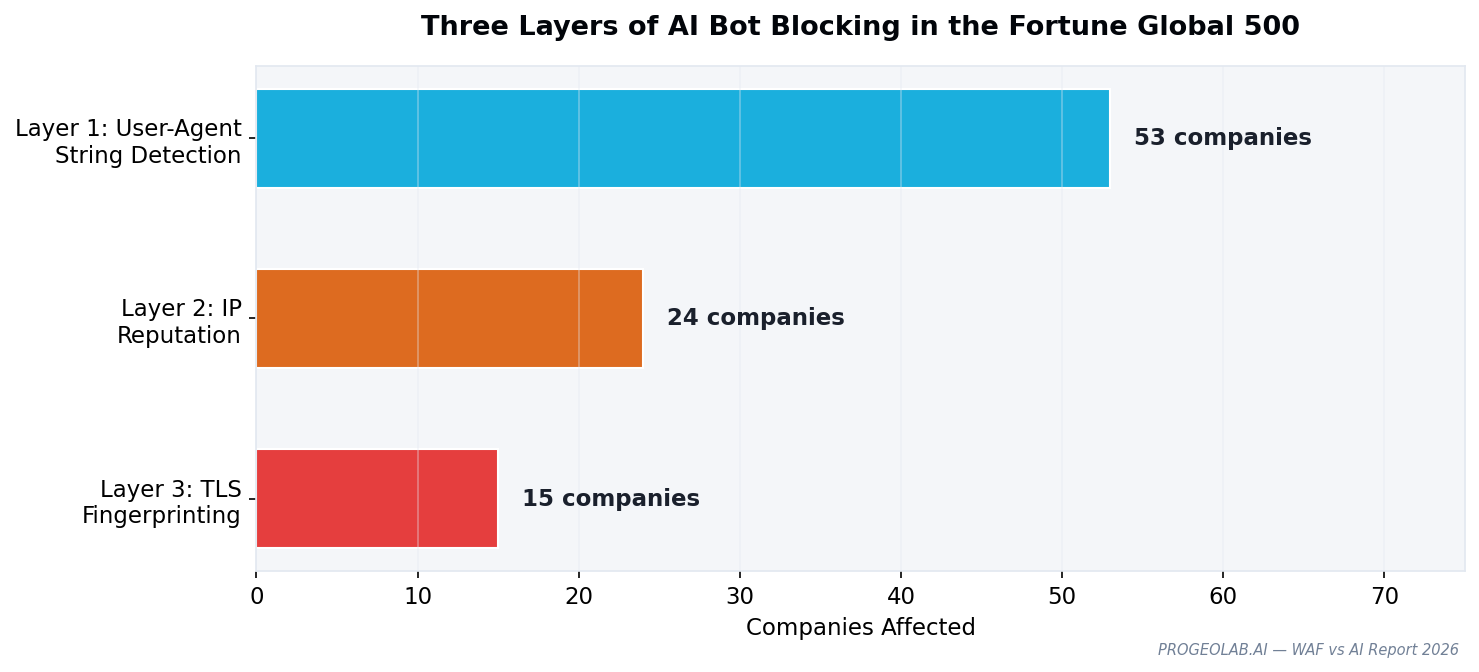

Web Application Firewalls are the invisible infrastructure that determines whether AI crawlers can access enterprise websites. This report presents the first WAF vendor attribution study across the Fortune Global 500, revealing that AI blocking operates at three distinct layers — User-Agent string, IP reputation, and TLS fingerprinting — each controlled by different WAF features and each requiring a different countermeasure to bypass.

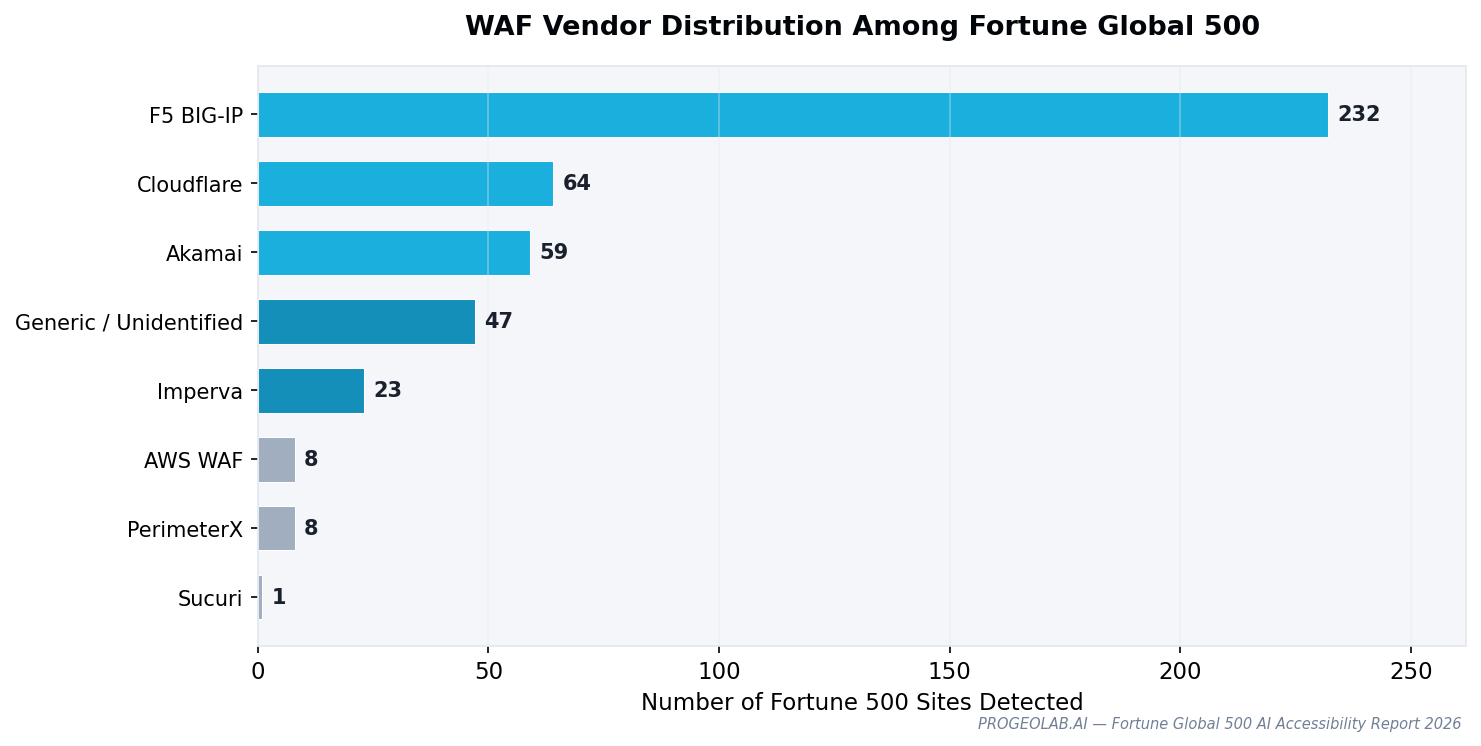

The headline finding is the vendor landscape itself. F5 BIG-IP runs on 232 Fortune 500 sites, 53.6% of the total corpus. That exceeds Cloudflare (64), Akamai (59), and Imperva (23) combined (146 sites). Public discourse around WAFs tends to center on Cloudflare because of its visibility in the developer ecosystem; the Fortune 500 tells a different story. F5 is the enterprise default because banks, insurers, and industrial companies have long-standing application-delivery relationships with the vendor; for them, WAF functionality is an add-on to existing infrastructure, not a standalone purchase.

The Three Layers

AI blocking is not one technique. Raw response body analysis across the 53 companies in the GEO Visibility Gap reveals three technically distinct layers, each requiring a different response. A company using all three is functionally unreachable; a company using only one can often be accessed by a suitably configured crawler.

Layer 1 — User-Agent string (53 companies)

The request's User-Agent header identifies the client as ChatGPT-User, and a WAF rule returns 403. This layer is trivially defeated by changing the UA string, but a declared UA is also trivial for a WAF to detect. Most WAF bot-management rules sit here. This is where F5 BIG-IP's default bot blocklists, Cloudflare's "Likely Automated" rule, and Akamai Bot Manager's category filters operate.

Layer 1 blocking is often unintentional — a WAF rule designed for scrapers catches AI crawlers as collateral damage. The robots.txt vs WAF inconsistency documented elsewhere in this series is largely a Layer 1 phenomenon.
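A minimal sketch of how a Layer 1 rule behaves, assuming a hypothetical scraper-oriented pattern list (the pattern and the `layer1_status` helper are illustrative, not taken from any vendor's rule set). It shows both properties described above: a generic "bot" pattern catches AI crawlers as collateral damage, and a changed UA string passes.

```python
import re

# Hypothetical scraper-oriented rule: generic substrings plus one explicit AI agent.
# Real WAF rule sets are vendor-specific and far larger.
BLOCKED_UA_PATTERN = re.compile(r"bot|crawl|spider|scrape|chatgpt-user", re.IGNORECASE)


def layer1_status(headers: dict[str, str]) -> int:
    """Return the HTTP status a User-Agent-only rule would produce."""
    user_agent = headers.get("User-Agent", "")
    # The rule looks only at the declared UA string, nothing else about the client.
    return 403 if BLOCKED_UA_PATTERN.search(user_agent) else 200
```

Under these assumptions, `layer1_status({"User-Agent": "GPTBot/1.0"})` returns 403 even though the rule never names GPTBot, while the same client sending a browser-style UA string receives 200.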

Layer 2 — Datacenter IP reputation (24 companies)

Any traffic from a known cloud or datacenter IP range is blocked, regardless of user agent. This defeats naive UA spoofing because the IP block precedes the UA inspection. Layer 2 blocking is typical of Akamai Bot Manager's "Datacenter" category and F5's IP reputation service.

AI crawlers operate from datacenters by definition. OpenAI publishes its IP ranges precisely so enterprises can whitelist them, but only 3 of the 24 Layer-2-blocking Fortune 500 companies have configured any such exception. The rest maintain blanket datacenter blocks that incidentally block legitimate AI retrieval.
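The exception described above can be sketched as an allowlist that is evaluated before the blanket datacenter block. The CIDR ranges below are placeholders from the RFC 5737 documentation blocks, not real datacenter or OpenAI ranges, and `layer2_allows` is a hypothetical helper.

```python
from ipaddress import ip_address, ip_network

# Placeholder ranges (RFC 5737 documentation blocks), standing in for a
# datacenter reputation feed and a crawler operator's published ranges.
DATACENTER_RANGES = [ip_network("198.51.100.0/24"), ip_network("203.0.113.0/24")]
PUBLISHED_CRAWLER_RANGES = [ip_network("203.0.113.64/26")]


def layer2_allows(client_ip: str) -> bool:
    """Return True if a Layer 2 rule with a crawler exception admits this IP."""
    addr = ip_address(client_ip)
    # The exception must be checked first; otherwise the blanket block wins.
    if any(addr in net for net in PUBLISHED_CRAWLER_RANGES):
        return True
    return not any(addr in net for net in DATACENTER_RANGES)
```

Because the decision uses only the source address, the User-Agent header never enters the check, which is why UA spoofing does not help at this layer.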

Layer 3 — TLS fingerprinting (15 companies)

The TLS handshake itself reveals whether the client is a real browser or an HTTP library. Cloudflare Bot Management inspects the JA3/JA4 fingerprint of the ClientHello message and rejects any fingerprint that doesn't match a known browser. The TCP connection is reset during the handshake, before any HTTP request is parsed.

Layer 3 is the hardest to bypass because the fingerprint is set before a single HTTP header is sent. An AI crawler built on Python's httpx or requests generates a JA3 hash that differs from those of Chrome, Firefox, and Safari. TLS fingerprint forgery is possible (via tools like curl-impersonate, a curl build that mimics browser handshakes), but no production AI crawler implements it: OpenAI, Anthropic, and Perplexity all use standard HTTP libraries and are all Layer-3 blocked at Cloudflare-protected sites.
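To make the fingerprint concrete, here is a sketch of the publicly documented JA3 construction: five ClientHello fields are joined into a string and MD5-hashed, with GREASE placeholder values dropped. The function names are hypothetical, the inputs are assumed to be already parsed from the handshake, and JA4 uses a different format that is not shown.

```python
import hashlib
from typing import Sequence


def is_grease(value: int) -> bool:
    """GREASE values (0x0A0A, 0x1A1A, ... 0xFAFA) are excluded from JA3."""
    return (value & 0x0F0F) == 0x0A0A and (value >> 8) == (value & 0xFF)


def ja3_string(version: int, ciphers: Sequence[int], extensions: Sequence[int],
               curves: Sequence[int], point_formats: Sequence[int]) -> str:
    """Build 'version,ciphers,extensions,curves,point_formats' in decimal."""
    parts = [str(version)]
    for field in (ciphers, extensions, curves):
        parts.append("-".join(str(v) for v in field if not is_grease(v)))
    parts.append("-".join(str(v) for v in point_formats))
    return ",".join(parts)


def ja3_hash(version: int, ciphers: Sequence[int], extensions: Sequence[int],
             curves: Sequence[int], point_formats: Sequence[int]) -> str:
    """MD5 hex digest of the JA3 string; order of values is significant."""
    text = ja3_string(version, ciphers, extensions, curves, point_formats)
    return hashlib.md5(text.encode("ascii")).hexdigest()
```

Since the order of cipher suites and extensions is part of the string, two clients that offer the same capabilities in a different order produce different hashes. That is one reason an HTTP library and a browser are distinguishable without any HTTP header being read.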

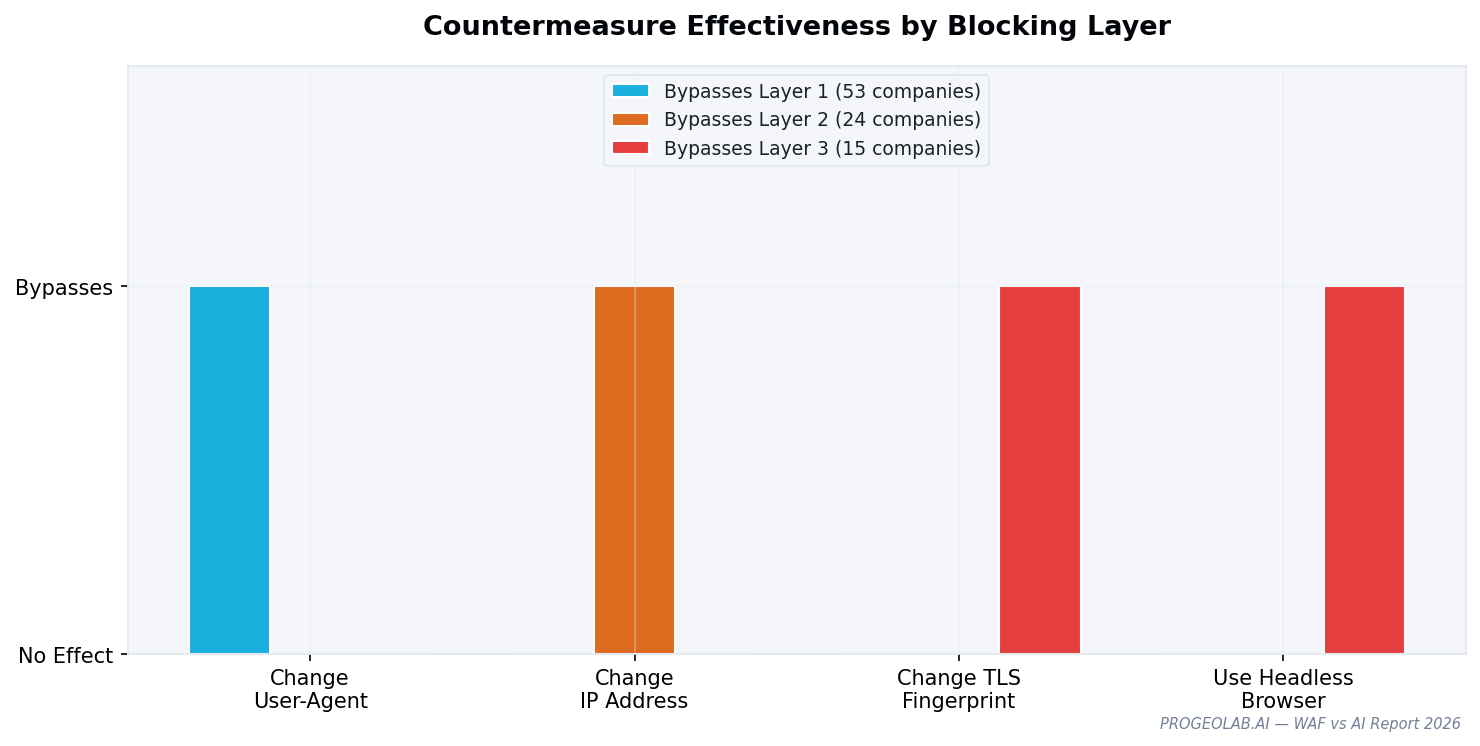

Layers stack. Countermeasures don't.

There is no single fix that restores AI accessibility across all three layers. Whitelisting the ChatGPT-User UA string at the WAF addresses Layer 1 but not Layer 2 or 3. Whitelisting OpenAI's IP ranges addresses Layer 2 but not Layer 1 or 3. And Layer 3 bypasses require vendor-level action at Cloudflare or Akamai — a site's own administrators can't configure around JA3 rejection without changing WAF vendor.
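The stacking behavior can be illustrated with a toy model. The `Exceptions` dataclass and `blocking_layers` function are hypothetical names for illustration only; real WAF policy evaluation is considerably more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exceptions:
    """Which per-layer exceptions a site has configured (illustrative only)."""
    ua_allowlisted: bool = False    # Layer 1: User-Agent rule exception
    ip_allowlisted: bool = False    # Layer 2: datacenter IP exception
    tls_allowlisted: bool = False   # Layer 3: fingerprint exception (vendor-side)


def blocking_layers(active_layers: set[int], exc: Exceptions) -> list[int]:
    """Return the active layers that still block the crawler."""
    cleared = {1: exc.ua_allowlisted, 2: exc.ip_allowlisted, 3: exc.tls_allowlisted}
    return sorted(layer for layer in active_layers if not cleared[layer])
```

In this model, a site running all three layers that allowlists only the UA string still reports layers 2 and 3 as blocking; access is restored only when the returned list is empty.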

The practical implication for enterprises: restoring AI access is a multi-team project. Content policy (robots.txt, llms.txt) is owned by SEO/marketing. WAF rules are owned by security. Datacenter IP exceptions are owned by network operations. TLS-layer behavior is owned by the WAF vendor itself. Shipping an "AI-friendly" site requires alignment across all four.

No native AI crawler verification

Googlebot can be verified by forward-confirmed reverse DNS: reverse-resolve the IP, confirm the resulting hostname ends in googlebot.com or google.com, forward-resolve that hostname, and confirm it matches the original IP. This makes Googlebot impersonation detectable and lets WAFs trust verified Googlebot requests while filtering spoofs.
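The lookup sequence above can be sketched with Python's standard `socket` module. The `is_verified_crawler` function and its injectable resolver parameters are illustrative assumptions rather than any WAF's implementation, and `socket.gethostbyname_ex` covers IPv4 only.

```python
import socket
from typing import Callable

# Suffixes Google documents for its common crawlers; the leading dot avoids
# matching look-alike domains such as "notgooglebot.com".
TRUSTED_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_lookup(ip: str) -> str:
    """PTR lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname: str) -> list[str]:
    """A-record lookup: hostname to IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_crawler(ip: str,
                        suffixes: tuple[str, ...] = TRUSTED_SUFFIXES,
                        reverse: Callable[[str], str] = reverse_lookup,
                        forward: Callable[[str], list[str]] = forward_lookup) -> bool:
    """Forward-confirmed reverse DNS check for a claimed crawler IP."""
    try:
        hostname = reverse(ip).rstrip(".").lower()
    except OSError:  # no PTR record or resolver failure
        return False
    if not hostname.endswith(suffixes):
        return False
    try:
        # The forward lookup must return the original IP, or the PTR is untrusted.
        return ip in forward(hostname)
    except OSError:
        return False
```

The forward step matters because anyone who controls an IP block can set its PTR record to an arbitrary hostname; only the owner of the domain controls what that hostname resolves to.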

No AI crawler has equivalent verification infrastructure integrated into WAF products. GPTBot's reverse DNS resolves to generic AWS hostnames. ClaudeBot's resolves to generic Google Cloud hostnames. No major WAF vendor ships a native AI-verification feature.

The absence of verification forces enterprises into all-or-nothing decisions. They cannot reliably say "allow the real GPTBot, block imitators", so they say either "allow anything claiming to be GPTBot" (and get scraped) or "block GPTBot entirely" (and disappear from AI answers). If AI companies offered reverse DNS verification for their crawlers, as Google does for Googlebot, it would reduce the friction that drives blanket blocking.

What's in the full report

- Complete WAF vendor attribution per Fortune 500 company

- Signature library: the response-body patterns used to identify each vendor

- Layer-by-layer fingerprint analysis (JA3 hashes for common AI crawlers)

- Cloudflare Bot Management deep-dive: what "Likely Automated" actually means

- Enterprise recommendations — restoring AI access without rearchitecting security

- AI-company recommendations — what OpenAI, Anthropic, and Perplexity could ship to reduce friction